What do we know about the tail of the curve?

- Richard Dixon

- Mar 1, 2017

Much of how we underwrite and model nowadays is done under the watchful eye (some I'm sure might say handcuffs) of the regulator. Whether you like it or not, there is obvious prudence inherent in the guidelines to ensure companies are capitalised to withstand that 1-in-200 year event or year when it does arrive. But how much do we know about the sort of events that make up the tail?

US Hurricane, eh? Boooring! Been there done that. It's a mature peril. Old hat. Many other new, exciting things occupy our minds at the moment whether it's cyber, the growing private flood insurance market or the thoughts towards opening up of insurance markets in emerging territories.

However, the example I'm going to show is hopefully enlightening, even for one of our best-understood perils. It speaks to the idea that we might know a little less than we would like to think about the type of event that inhabits the tail of the curve. For this I am very grateful for the help of Frank Lavelle at Applied Research Associates (ARA) and Joh Carter at OASIS, who host their model. They have allowed me to use some results to demonstrate an interesting point. I'm sure you will be able to replicate this with your own vendor model(s) of choice; indeed I have done so for one of the vendors in the past and it produced a similar message to the one you'll see below.

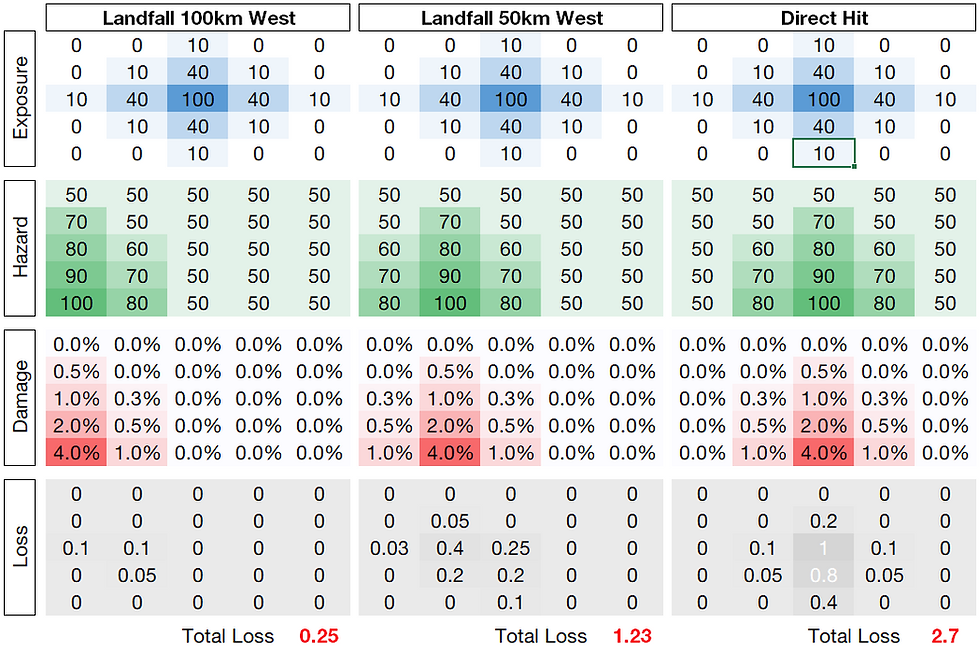

What I've done is taken each stochastic event in their model and placed this into a loss return period "bucket". The purpose of this is to understand what sort of windspeeds make the most significant contribution to loss in a few return period bands. The portfolio being used here is a Florida portfolio that has a sensible distribution of exposure within the state.

The chart below shows the proportion of loss that comes from each windspeed band in three different return period bands: 5-10 years, 30-50 years and 200-300 years. The data for each return period band is made up of 20 randomly picked storms.
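The bucketing described above can be sketched roughly as follows. This is a minimal illustration, not the ARA or OASIS workflow: the event layout (a `return_period` plus a list of per-location `(windspeed_mph, loss)` pairs), the windspeed band edges and the function names are all assumptions made for the example, and the random pick of 20 storms per band is left out for brevity.

```python
from collections import defaultdict

# Assumed band edges; the post does not state the exact ones behind the chart.
WIND_BANDS = [(0, 80), (80, 100), (100, 120), (120, 140), (140, float("inf"))]
RP_BANDS = [(5, 10), (30, 50), (200, 300)]


def band_label(value, bands):
    """Return a 'lo-hi' label for the band containing value, or None."""
    for lo, hi in bands:
        if lo <= value < hi:
            return f"{lo}-{hi}"
    return None


def loss_share_by_wind_band(events):
    """Proportion of loss per windspeed band within each return period band.

    events: iterable of dicts (hypothetical layout) with
      'return_period': loss return period of the event in years
      'locations': list of (windspeed_mph, loss) pairs
    """
    totals = defaultdict(lambda: defaultdict(float))
    for event in events:
        rp = band_label(event["return_period"], RP_BANDS)
        if rp is None:
            continue  # event falls outside the return period bands of interest
        for wind, loss in event["locations"]:
            wb = band_label(wind, WIND_BANDS)
            if wb is not None:
                totals[rp][wb] += loss

    shares = {}
    for rp, by_wind in totals.items():
        total = sum(by_wind.values())
        shares[rp] = {wb: loss / total for wb, loss in by_wind.items()} if total else {}
    return shares


# Toy events, invented purely to show the shape of the calculation.
events = [
    {"return_period": 250, "locations": [(130, 75.0), (110, 25.0)]},
    {"return_period": 7, "locations": [(70, 10.0)]},
    {"return_period": 20, "locations": [(90, 5.0)]},  # ignored: not in any band
]
shares = loss_share_by_wind_band(events)
```

With real model output, each event's return period would come from its position on the portfolio loss curve, which is assumed here to have been computed already.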

As you might expect, the shorter return periods are dominated by the lower wind speeds, and as the return period of loss increases, the wind speeds contributing most to loss shift from the lower to the higher bands.

The 200-300 year return period loss band is an interesting one here as naturally this is the return period band up to which companies are expected to be solvent. We can see that the majority of losses in this return period band - at least those generated from the ARA model - come from wind speeds above around 120 mph. As you might expect at this sort of return period, the type of events we're seeing here are Cat 4s and Cat 5s hitting major conurbations so high winds are the order of the day here.

But this throws up quite an important question: how much do we really know about 120+ mph wind speeds and the damage they cause?

Modern catastrophe models that used to rely on the engineering standards have now been validated heavily against loss data that recent hurricane seasons have provided us. So what sort of wind speeds have we seen in recent history for which we might have got loss data to help bolster our understanding and confidence in the vulnerability curves we use?

I have taken the liberty here of assuming that we typically started to get useful location-level loss data from around 1999 onward, the season when Hurricane Floyd made landfall. Again using the ARA model, I have counted the number of zips affected (excluding Irene and Sandy in this example) as a proxy for useful potential wind claims. From this we can work out what sort of wind speeds are most frequent in recent history.

The chart below simply shows the percentage of zipcodes impacted by each windspeed band. This highlights that only about 0.5% of recent windspeed data [amounting to around 50 zipcodes] has been above the (arbitrary) 120 mph threshold I chose above, the very range that is responsible for so much of the damage in the 200-300 year return period band.
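The zip-count percentages above amount to a simple histogram. A minimal sketch, assuming one peak windspeed per affected zip per storm; the function name, the two-band split and the toy input are hypothetical, chosen only so that the 120+ mph share comes out at 0.5%.

```python
from collections import Counter


def pct_zips_by_wind_band(zip_winds, bands):
    """Percentage of affected zips falling in each windspeed band.

    zip_winds: iterable of peak windspeeds in mph, one per affected zip
               per storm (assumed input; not the ARA model's output format)
    bands: list of (lo, hi) tuples, lower edge inclusive, upper exclusive
    """
    counts = Counter()
    n = 0
    for wind in zip_winds:
        for lo, hi in bands:
            if lo <= wind < hi:
                counts[(lo, hi)] += 1
                n += 1
                break
    return {band: 100.0 * counts[band] / n for band in bands} if n else {}


# Toy data: 199 zips below the threshold and 1 above it.
bands = [(0, 120), (120, float("inf"))]
winds = [60] * 199 + [125]
pct = pct_zips_by_wind_band(winds, bands)
```

Taken at face value, 50 zipcodes at roughly 0.5% would imply something in the region of 10,000 zip-level windspeed observations in total, so the sample above 120 mph is very thin indeed.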

Essentially, there is a bit of a disconnect here: for those wind speeds so important to the tail of the curve, we have precious little data. We really don't know much about building response in the tail of the curve when it comes to validating our models against loss data.

In doing this study, one thing jumped out at me. This lack of information on damage above 120 mph is a prompt to understand more on the engineering side about building failure rather than reliance on loss data to inform the vulnerability curve. This is nothing new: catastrophe models in the 1990s relied solely on engineering studies and judgement corroborated in some cases by heavily aggregated loss data. However I can't help but feel that we've become rather in thrall to location-level loss data as our means of building vulnerability curves - as well as "proving" models are fit for purpose in that they match loss data, which itself is by no means perfect. This topic also links back to the topic I touched upon in my last blog post around how hazard and vulnerability can become rather inextricably linked when using loss data in building catastrophe models.

No, this isn't a sales pitch, but this study brought sharply into focus for me companies such as ARA that offer a different view. With their vulnerability based largely on engineering principles in an era when other companies may have concentrated more on loss data, this type of model may be more valuable to the user than they might imagine. Playing devil's advocate, given how much loss data is used to validate some models, are we simply seeing models built to match the same sets of loss data?

Also, this opens the door to academics, through open-source modelling, to be able to share their view on building performance in high winds to help us obtain more views on vulnerability at high wind speeds that dominate damage in the tail of the curve, and build our own views of risk around this. It's this area that really excites me in catastrophe modelling at the moment: unlocking academic studies, published - or indeed unpublished - that may be narrow in their scope, but could contain very useful alternative views of hazard and vulnerability.

If I were in charge of understanding cat risk in a re/insurer now, I'd be looking to use one model that is built off loss data and one that is built largely using engineering principles to get a feel for potential uncertainties in the tail of the curve.
