Future Climate Risk: Are We Over-Simplifying Things?

- Richard Dixon

- Nov 22, 2021

Assessing climate risk (whether that means working out if the present-day risk from catastrophe models needs a miss factor, or estimating what future risk looks like) seems to be taking up more and more of our time, like it or not!

I've been thinking about the various stress tests that some or all of us will be asked to do down the line, and I wanted to share a really, really simple example that captures a concern of mine. I sense we're doing what we did with secondary uncertainty all those years ago: boiling down a really uncertain, immature area of science into a simple number that is supposed to help us understand future risk. But does it?

So, a simple example.

Hi, I'm Richard Dixon! I'm the CEO of Dixon Insurance (catchy name, yeah?) and I insure a single building on the Florida coastline. It's a niche affair, yes, I know. I've been asked to understand how climate risk might affect the 1-in-100-year impacts on my huge portfolio of one building by 2050.

I've been asked to stress-test this by uplifting the hazard intensity by 3%, in line with broad guidance from research papers that expect a mean increase in hazard of 3%.

My friendly local catastrophe model tells me my present-day 1-in-100-year wind speed is 115 mph. A 3% increase in hazard gives me 118.45 mph, if we're going to have delusional exactitude to two decimal places.
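The uplift itself is a single multiplication. A minimal Python sketch, assuming the stress test is a straight multiplicative scaling of the present-day 1-in-100-year wind speed (the function name `uplift_hazard` is hypothetical):

```python
def uplift_hazard(present_day_mph: float, uplift: float) -> float:
    """Scale a present-day hazard value by a fractional uplift (0.03 means +3%)."""
    return present_day_mph * (1.0 + uplift)


# Present-day 1-in-100-year wind of 115 mph, uplifted by 3%.
future_100yr_wind = uplift_hazard(115.0, 0.03)
print(f"{future_100yr_wind:.2f} mph")  # prints 118.45 mph
```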

Reading off a typical damage curve (pilfered from this paper), we can see our expected damage to the building goes from 5.0% to 6.1%, an increase of around 21%.
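The paper's damage curve isn't available here as a function, so as a stand-in this sketch assumes a hypothetical power-law curve, `damage = 0.05 * (v / 115) ** K`, with the exponent `K` chosen so it passes through the two points quoted above (5.0% at 115 mph, 6.1% at 118.45 mph). It is illustrative only and is not the curve from the paper.

```python
import math

# Hypothetical power-law damage curve, calibrated only to the two quoted points.
V_REF, D_REF = 115.0, 0.050   # present-day 1-in-100 wind (mph) and damage ratio
V_FUT, D_FUT = 118.45, 0.061  # uplifted wind (mph) and damage ratio read off the curve

# Solve D_FUT / D_REF = (V_FUT / V_REF) ** K for the exponent K.
K = math.log(D_FUT / D_REF) / math.log(V_FUT / V_REF)


def damage_ratio(wind_mph: float) -> float:
    """Toy damage ratio (fraction of building value); only meant for roughly 100-140 mph."""
    return D_REF * (wind_mph / V_REF) ** K


present = damage_ratio(V_REF)
future = damage_ratio(V_FUT)
relative_increase = future / present - 1.0
print(f"exponent K = {K:.2f}")
print(f"damage {present:.1%} -> {future:.1%} ({relative_increase:.0%} increase)")
```

With the rounded figures quoted, the relative increase comes out at 22% (6.1 / 5.0), close to the "around 21%" in the text, and the fitted exponent is roughly 6.7: a steep curve, which is why a 3% change in wind moves damage so much.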

For such a small change in hazard, a 21% increase in damage potential is pretty noteworthy and worth knowing. Job done.

Or is it?

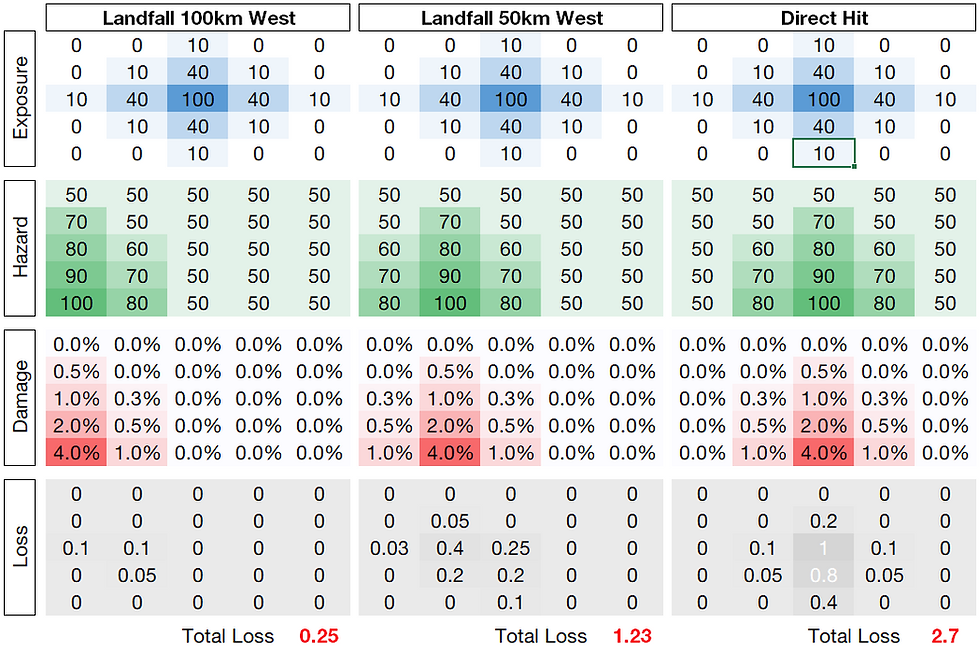

You see, Dixon Insurance is a one-man operation, so I'm also Chief Research Officer, and I'm an inquisitive bugger. So I wanted to look at the various models the 3% increase in hazard came from. The table below shows how we might get to that number from the climate models available to us:

And we can see that our 3% increase in wind speed comes from an array of models, some of whose winds are much higher than our current 100-year wind speed of 115 mph, and some of which are actually even lower. There is a range of outcomes, and the mean of these hazard values gives us the 3% increase to 118.45 mph that we used to read 6.1% off the damage curve.
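The table's values aren't reproduced here, so this sketch uses eight made-up model values (hypothetical, chosen only so that some sit below today's 115 mph and their mean lands on 118.45 mph) to show how the ensemble-mean uplift is computed.

```python
from statistics import mean

PRESENT_100YR_WIND = 115.0  # mph, from the catastrophe model

# Hypothetical 2050 1-in-100-year wind speeds (mph) from eight climate models.
model_winds = [110.0, 113.0, 115.0, 117.0, 119.0, 122.0, 124.0, 127.6]

# Average the hazard across models, then express it as an uplift on today's value.
ensemble_mean = mean(model_winds)
uplift = ensemble_mean / PRESENT_100YR_WIND - 1.0
print(f"ensemble mean {ensemble_mean:.2f} mph, uplift {uplift:.1%}")
```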

But something doesn't smell right here. Why are we averaging the hazard to use for guidelines? Shouldn't we be averaging the damage from the underlying models here? What does that look like?

Well, actually, it looks quite different. The 6.1% damage ratio we got by using the mean hazard rises to 6.7% when we convert each model's hazard to damage and then average, rather than averaging the hazard and then reading off the damage. Against today's 5.0%, that is an increase of roughly a third rather than 21%: about 1.7 percentage points of extra damage instead of 1.1, or around 50% more future damage potential than our original estimate.
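The two orders of operation can be compared directly. This sketch uses a hypothetical power-law curve calibrated to the 5.0% at 115 mph and 6.1% at 118.45 mph points quoted earlier, plus eight made-up model winds averaging 118.45 mph. These are illustrative stand-ins, not the post's curve or table, so the numbers won't match the 6.7%; what carries over is the direction of the gap. Because the curve is convex (it steepens as wind rises), the average of the per-model damages is always at least the damage at the average wind, which is Jensen's inequality.

```python
import math
from statistics import mean

# Hypothetical convex damage curve through (115 mph, 5.0%) and (118.45 mph, 6.1%).
K = math.log(0.061 / 0.050) / math.log(118.45 / 115.0)


def damage_ratio(wind_mph: float) -> float:
    """Toy damage ratio as a fraction of building value."""
    return 0.050 * (wind_mph / 115.0) ** K


# Hypothetical 2050 1-in-100-year winds (mph) from eight climate models; mean is 118.45.
model_winds = [110.0, 113.0, 115.0, 117.0, 119.0, 122.0, 124.0, 127.6]

# Approach 1: average the hazard, then read the damage off the curve.
damage_at_mean_wind = damage_ratio(mean(model_winds))

# Approach 2: convert each model's hazard to damage, then average the damage.
mean_of_model_damages = mean(damage_ratio(w) for w in model_winds)

print(f"damage at mean wind:   {damage_at_mean_wind:.2%}")
print(f"mean of model damages: {mean_of_model_damages:.2%}")
```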

Now let's say we looked at a different set of 8 climate models, with a greater range of outcomes but, just like before, a similar mean increase in hazard:

This time, because the damage curve gets steeper as wind speed rises, the models with a larger increase in future hazard skew our losses even higher when we average the various models' damage. A 56% increase rather than 21% is a really marked difference. We can only get to this 33% or 56% by assessing the underlying climate models; otherwise we could be fooling ourselves.
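A wider spread with the same mean makes the gap bigger. In this sketch a second hypothetical ensemble is built by doubling each model's distance from the 118.45 mph mean, so the mean hazard is unchanged but the spread grows; on a convex curve that pushes the average damage up further. The curve (calibrated to the quoted 5.0% and 6.1% points) and the winds are made-up stand-ins, not the values behind the post's 33% and 56%.

```python
import math
from statistics import mean
from typing import Sequence

PRESENT_WIND, PRESENT_DAMAGE = 115.0, 0.050

# Hypothetical convex damage curve through (115 mph, 5.0%) and (118.45 mph, 6.1%).
K = math.log(0.061 / PRESENT_DAMAGE) / math.log(118.45 / PRESENT_WIND)


def damage_ratio(wind_mph: float) -> float:
    """Toy damage ratio as a fraction of building value."""
    return PRESENT_DAMAGE * (wind_mph / PRESENT_WIND) ** K


def uplift_vs_present(model_winds: Sequence[float]) -> float:
    """Relative increase of the mean per-model damage over present-day damage."""
    return mean(damage_ratio(w) for w in model_winds) / PRESENT_DAMAGE - 1.0


# Hypothetical narrow ensemble (mean 118.45 mph) and a wider one with the same mean,
# made by doubling every model's deviation from that mean.
narrow = [110.0, 113.0, 115.0, 117.0, 119.0, 122.0, 124.0, 127.6]
centre = mean(narrow)
wide = [centre + 2.0 * (w - centre) for w in narrow]

print(f"mean hazard: narrow {mean(narrow):.2f} mph, wide {mean(wide):.2f} mph")
print(f"damage uplift: narrow {uplift_vs_present(narrow):.0%}, wide {uplift_vs_present(wide):.0%}")
```

Scaling every deviation by a factor above one is a mean-preserving spread, so for any convex damage curve the averaged damage can only stay the same or rise. That is the effect the wider set of models shows.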

Now, I appreciate this is a very crude example, for a single location rather than a portfolio with all its spatial correlations, but it serves a purpose here. And so I end this short post rather grumpily:

We cannot understand the impacts of climate models until we actually understand how each model affects our book of business. Boiling the output down to get to "the number" for internal reporting or regulators might actually end up misleading us.

Addendum: the thoughts here are mine and not of my employer.
